a case for open source

As artificial intelligence transitions from an emerging technology to the foundational infrastructure of global civilization, its governance is being shaped by two converging forces: geopolitical rivalry between the United States and China, and the concentration of compute, data, and model capability in a small number of corporate actors. This paper advances a policy-theoretical argument that open-source AI can serve as a democratizing counterweight to these concentrating dynamics under appropriate governance conditions.


problem

As artificial intelligence becomes the foundational infrastructure of global civilization, control over its development is concentrating in a handful of corporations and states — creating conditions in which the cognitive systems that shape knowledge, decisions, and public life are governed by actors accountable to neither democratic publics nor the communities they affect.

solution

The paper proposes treating foundational AI capabilities as a public commons governed by distributed, multistakeholder institutions — drawing on Elinor Ostrom's commons governance framework and grounded in emerging legislative models such as Brazil's State Complementary Law No. 205. It introduces "mutually assured transparency" as a novel concept for how open-source ecosystems can dampen the most dangerous dynamics of geopolitical AI competition by making capabilities legible to all actors without requiring the bilateral trust that formal arms control demands.

Abstract

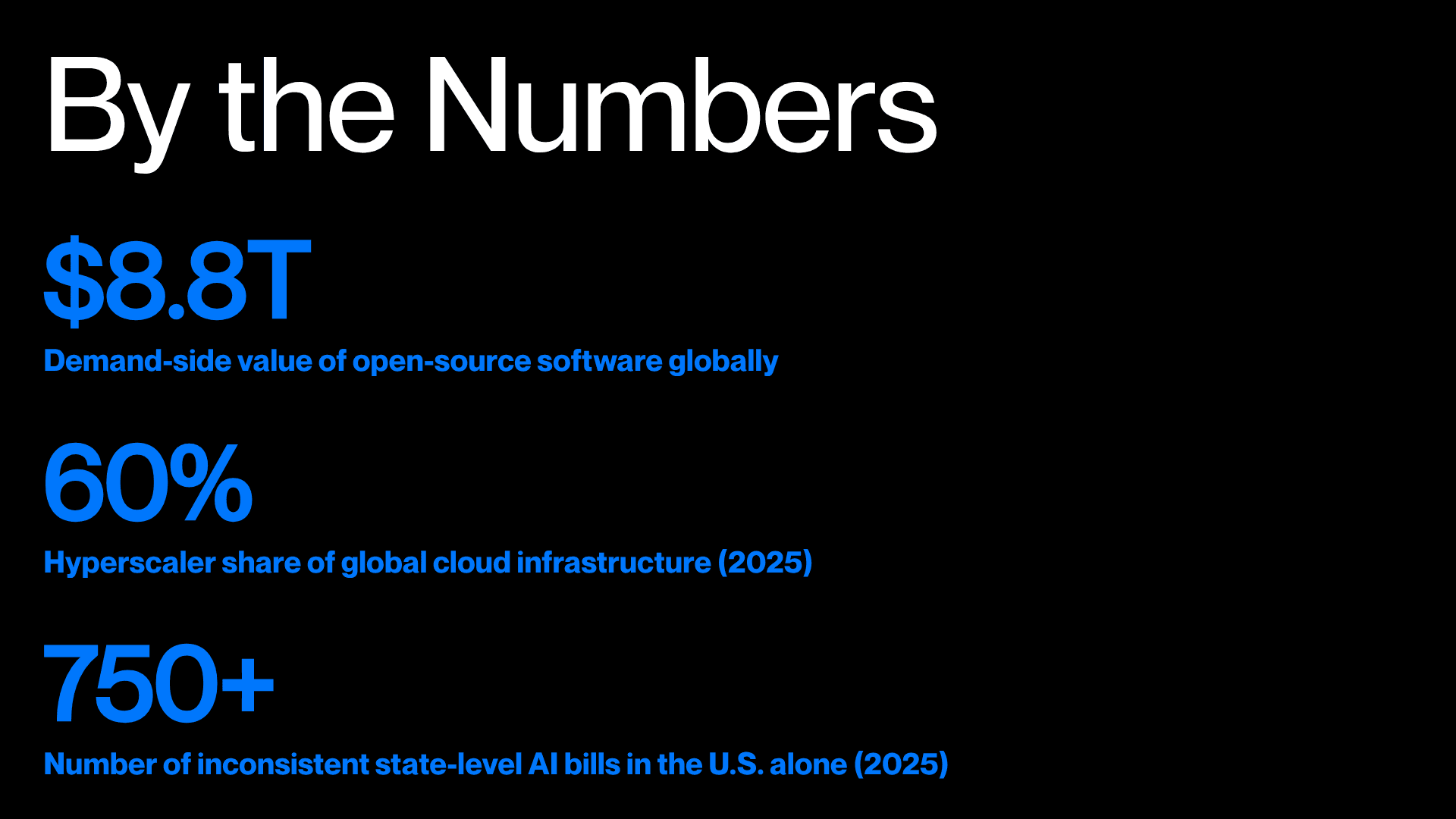

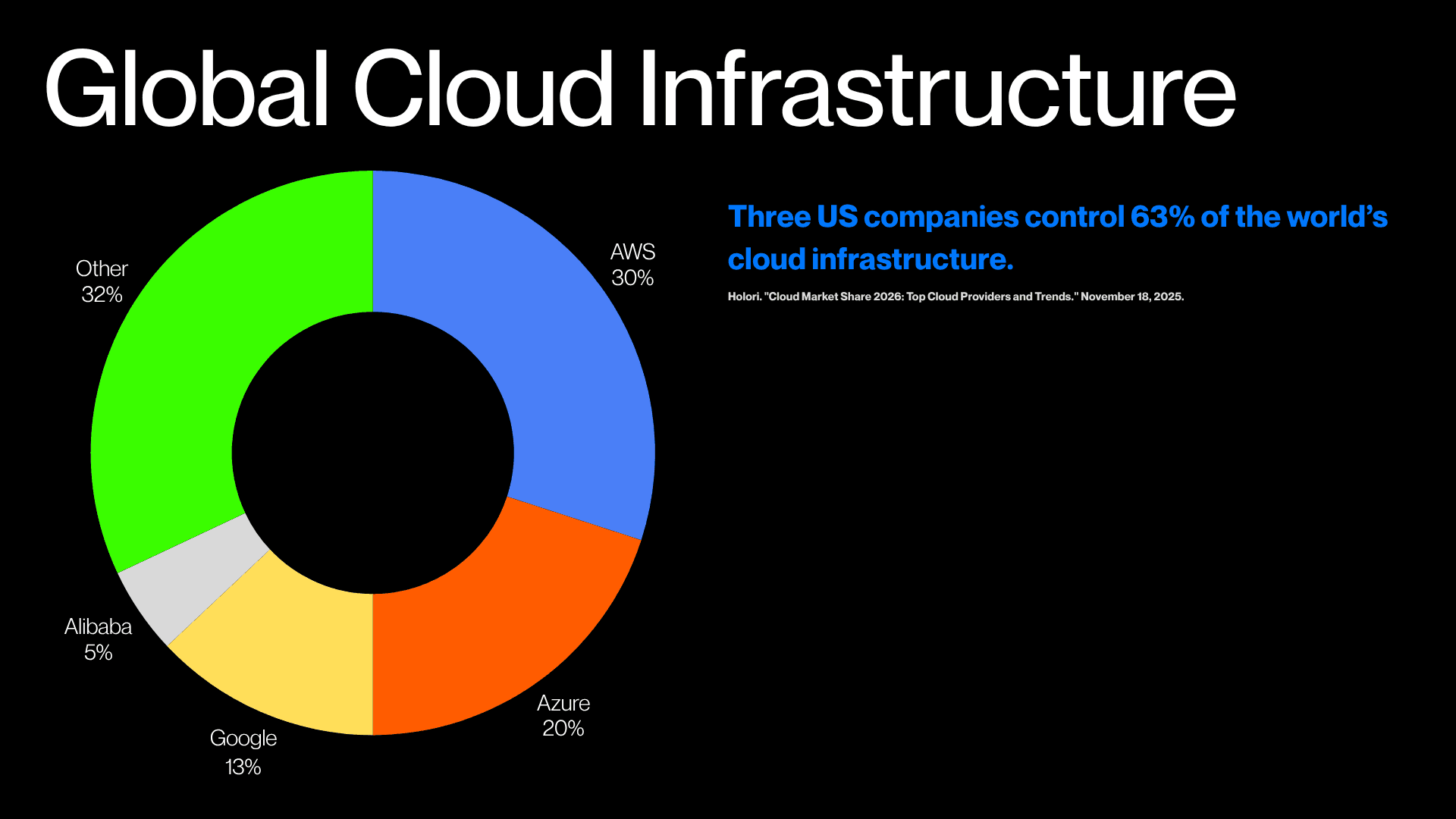

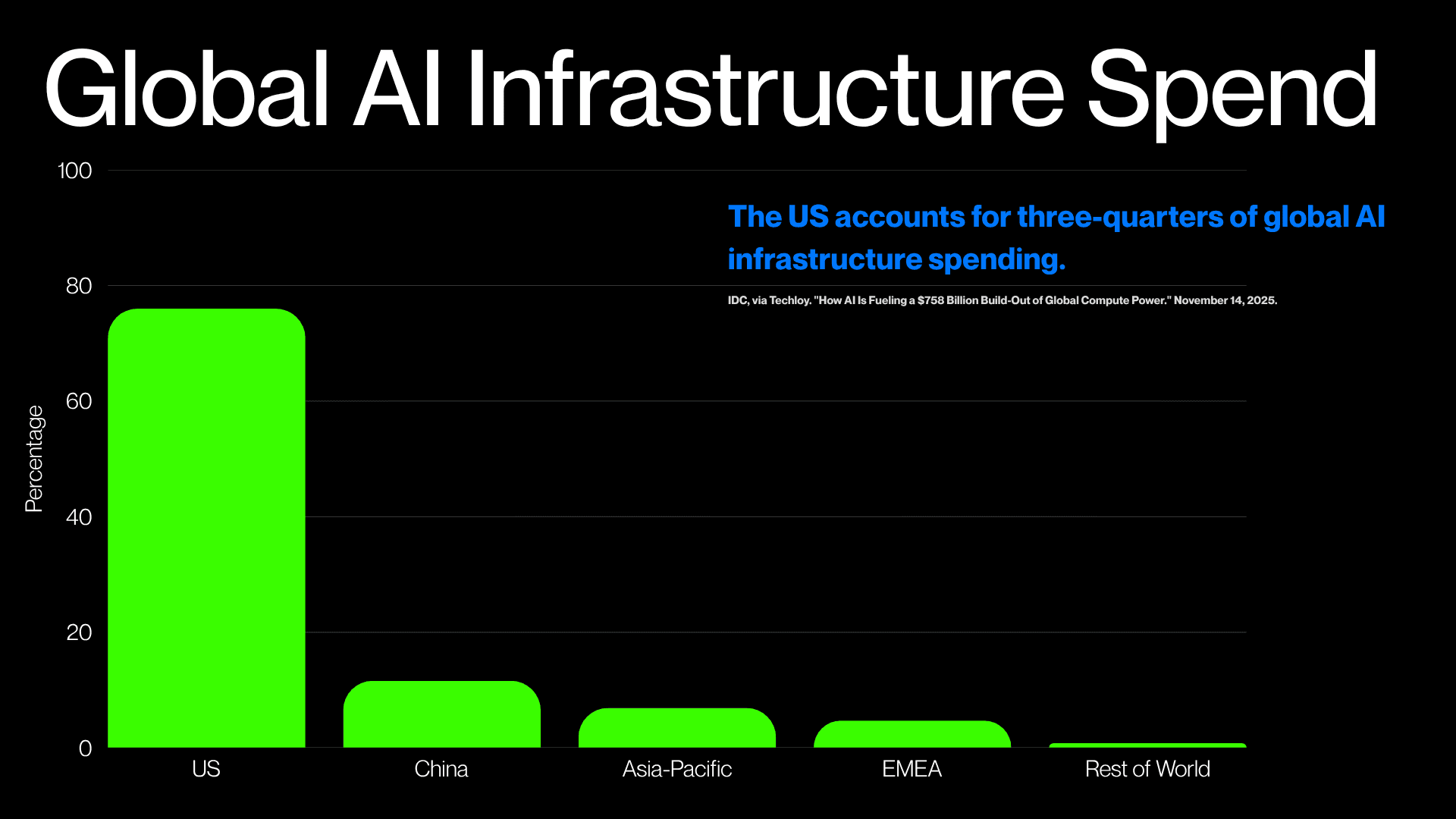

As artificial intelligence transitions from an emerging technology to the foundational infrastructure of global civilization, its governance is being shaped by two converging forces: an intensifying geopolitical rivalry between the United States and China, and an unprecedented concentration of compute, data, and model capability in a small number of corporate actors. Three hyperscale cloud providers control over 60 percent of the global cloud infrastructure market. The semiconductor stack that underlies AI training concentrates critical bottlenecks (advanced GPUs, EDA tools, lithography equipment, high-bandwidth memory (HBM), and advanced packaging) in a handful of firms and states. By contrast, Harvard Business School research estimates that open-source software creates $8.8 trillion in demand-side economic value globally, constituting what economists call “digital dark matter”: non-pecuniary inputs into production that standard GDP measurement cannot capture.

This paper advances a policy-theoretical argument that open-source AI can serve as a democratizing counterweight to these concentrating dynamics under appropriate governance conditions. Drawing on commons governance theory pioneered by Elinor Ostrom and extended to knowledge domains, the paper proposes that treating the foundational layers of AI as a public commons rather than a proprietary asset can mitigate technological authoritarianism—a condition in which the cognitive infrastructure of civilization is governed by actors accountable neither to a democratic public nor to the communities they affect. The paper further introduces the concept of “mutually assured transparency”: the argument that open-source AI ecosystems create structural conditions of mutual visibility among competing states and firms, dampening the asymmetric and opaque dynamics that fuel the most dangerous escalatory behavior in AI competition. Importantly, this transparency operates primarily on capability legibility—what models can do—rather than governance legibility—what values they encode. The concept is tested against its strongest complication—China’s simultaneous pursuit of aggressive open-source release and mandatory ideological alignment under its Interim Measures for Generative AI—and a governance framework is proposed that draws on emerging legislative models, including Brazil’s pioneering State Complementary Law No. 205.

"The question is not only who builds the best models but who governs the cognitive infrastructure of the twenty-first century."

The Argument in Three Moves:

1. AI is becoming infrastructure, and infrastructure concentration is power concentration.
2. Open-source ecosystems redistribute that power by making capabilities transparent, forkable, and auditable.
3. This transparency creates a geopolitical stabilizing effect, not by ending competition but by reducing the opacity that makes competition most dangerous.

year

2026

timeframe

Ongoing

category

Research